AID and traffic monitoring in tunnels with FLOW

Timely detection and response to incidents are crucial in tunnels since fire and smoke can be very dangerous there. Our

TrafficSurvey – Video post-processing platform

Not sure what suits your needs best? Contact our solution specialists!

Bringing new ideas to life is our passion. Our devoted engineering team constantly works on future innovations and improvements.

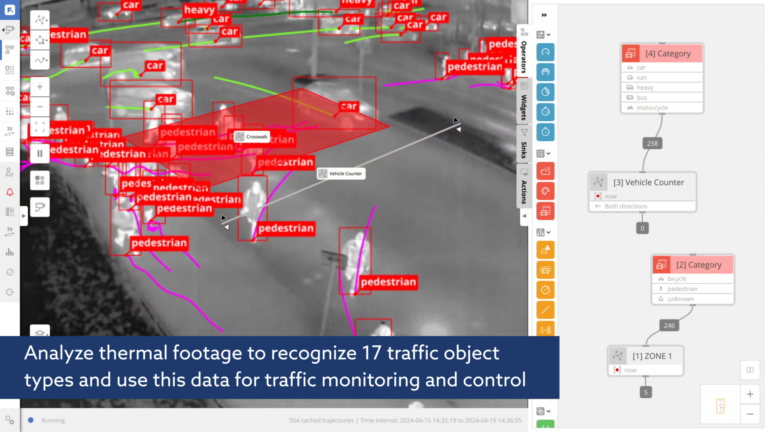

Monitor traffic and detect incidents even in complete darkness using thermal imaging technology. Gather detailed traffic data, ensure the safety

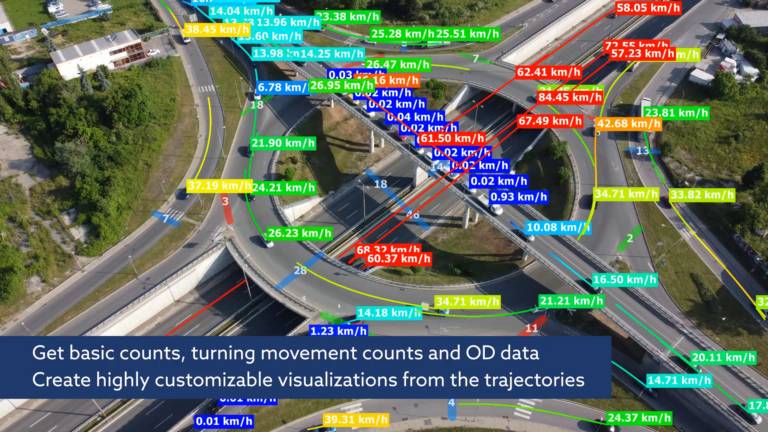

Drone traffic analysis allows you to cover large areas and can be done even for multi-level intersections. This requires a

We would like to invite you to join us at one of the most important traffic events in the UK –

What are the benefits of doing a dual-perspective view #TrafficSurvey? The ground view and drone view complement each other! The

This is what the traffic situation at the CBS Arena in Coventry looks like, and we are exhibiting there at Traffex right

We are exhibiting at Traffex today and tomorrow. This traffic-oriented event takes place in Coventry, UK, and we would love

We are developing a system that will be capable of estimating traffic emissions more accurately and at scale in cooperation

Single trajectory even for more complex intersections and roundabouts! This is now possible with the FLOW system as long as

DataFromSky will be exhibiting at ITS California 26-28 August 2024 in San Francisco, USA. Get in touch with us to

Phone: +420 604 358 993

Email: info@datafromsky.com

