One traffic framework. Any video source. All traffic tasks.

Everything you need for real-time or post-recording evaluation of traffic situations in cities, on highways, in parking lots, in buildings… all in one comprehensive platform that speaks many languages. The one traffic brain to rule them all.

Traffic translated into actions

Design the meaning of smart

Build for all thinkable scenarios

Our references

Our solutions

01. Get more from your camera

TrafficEmbedded

Turn any camera into a smart traffic sensor with built-in deep video analytics.

A smart AI traffic platform in the form of a wireless, anti-vandal outdoor device with an IP66 rating, PoE, and GPIO ports! Control individual intersections locally with smart analytics and become a real-time traffic commander.

02. Make your city smart!

TrafficEnterprise

Convert any camera network into real-time traffic intelligence for the smart cities of tomorrow.

The sixth traffic sense running on your in-house AI servers. Discover the visual solver for all traffic tasks with a fully interactive interface. Become a master of smart city traffic.

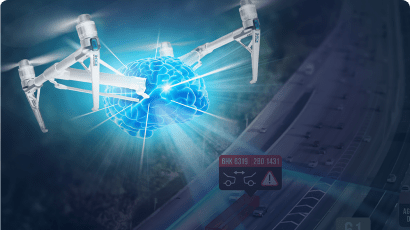

03. For any smart traffic application

TrafficSurvey

Get detailed and advanced traffic analysis from any video data, be it from a drone or a fixed camera recording.

The most advanced post-recording analytics on the market with an unbeatable track record and affordable price. Enjoy the most comprehensive toolset available with a reassuring 100% accuracy guarantee. Leverage our rock-solid data to design better traffic solutions.

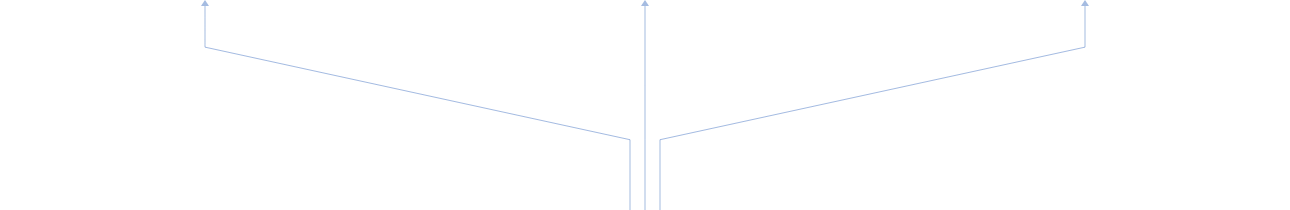

04. See the big picture with a bird’s-eye view

TrafficDrone

Get complex data with our state-of-the-art aerial solution. Enjoy augmented live video with sophisticated traffic insights.

Acquire precise speed and distance measurement, time-gap monitoring, zone detection and many other features packed in an intelligent drone. Utilize highly accurate, real-time information to ensure safety and maximize drivers’ comfort.

01. Smart on-edge sensor at your fingertips

TrafficCamera

Enjoy rich data with a convenient plug & play solution.

The traffic brain runs directly on your intelligent camera. Get sophisticated insights without the need for complex infrastructure or a high-speed connection.

Complex traffic framework

FLOW is a fully interactive traffic framework designed for both real-time applications and comprehensive traffic surveys. It is the first tool ever that visualizes traffic data live, right at your fingertips. Take advantage of automated actions on your traffic scenarios now!

Be a sensor designer

Convert any video stream into the traffic sensor you need in seconds with an innovative visual traffic language. Super easy and super powerful. Just FLOW.

Data visualization & export

Create customized dashboards optimized for your traffic tasks using various widgets. Live and interactive visual presentation of traffic knowledge has never been easier.

Born for integration

Specify which traffic knowledge is published and in what form. FLOW supports various communication protocols based on UDP, REST, etc.
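To give a feel for what a UDP-based integration could look like on the receiving side, here is a minimal Python sketch. It assumes that each datagram carries one UTF-8 encoded JSON object; the field names (`sensor`, `category`, `speed_kmh`), the port, and the helper names `parse_event` and `receive_events` are hypothetical and chosen only for illustration. The actual message format published by FLOW is not described here, so treat this as a starting point rather than a reference.

```python
import json
import socket


def parse_event(datagram: bytes) -> dict:
    """Decode one datagram, assuming it holds a single UTF-8 JSON object."""
    event = json.loads(datagram.decode("utf-8"))
    if not isinstance(event, dict):
        raise ValueError("expected a JSON object in the datagram")
    return event


def receive_events(host: str = "127.0.0.1", port: int = 9999,
                   max_events: int = 1, timeout: float = 5.0) -> list:
    """Listen on a UDP socket and return up to max_events parsed events.

    The host and port are placeholders; use whatever your publisher targets.
    Raises socket.timeout if nothing arrives within the timeout.
    """
    events = []
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.bind((host, port))
        sock.settimeout(timeout)
        while len(events) < max_events:
            data, _sender = sock.recvfrom(65535)  # max UDP payload size
            events.append(parse_event(data))
    return events


if __name__ == "__main__":
    # Hypothetical payload, used here only to exercise the parser.
    sample = b'{"sensor": "gate_1", "category": "car", "speed_kmh": 42.5}'
    print(parse_event(sample))
```

Keeping the parsing step separate from the socket loop means the same function can be reused if the data arrives over REST instead of UDP.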

Smart traffic starts with you.

Begin your DataFromSky experience now! Contact one of our solutions experts.